The dark side of social media platforms is once again in the spotlight. A recent investigation by the Wall Street Journal (WSJ), conducted with researchers from Stanford and the University of Massachusetts Amherst, found that Instagram’s recommendation algorithms have inadvertently fostered a “vast” network of pedophiles seeking illegal underage sexual content and activities. Unlike platforms dedicated to illicit content, Instagram does not merely host these activities; according to the report, its algorithms actively promote them. The findings underscore the urgent need for stronger measures to safeguard vulnerable users, particularly children, from online exploitation.

Instagram’s Response and Acknowledgment

Meta, Instagram’s parent company, has responded to the allegations, stating that it is actively working to combat such behavior. Meta acknowledges that it received reports of child sexual abuse but failed to act on them because of a software error, which has since been fixed. In addition to setting up an internal task force to investigate the claims, Meta says it has provided updated guidance to content reviewers and invested in technology to prevent predators from finding or interacting with teens on its platforms. The company emphasizes its commitment to fighting child exploitation and supporting law enforcement efforts to bring criminals to justice.

The Scale of the Network and Technical Challenges

Determining the precise scale of the pedophile network on Instagram is challenging due to technical and legal hurdles. The WSJ report cites the Stanford Internet Observatory’s research team, which identified 405 sellers of “self-generated” child-sex material using hashtags associated with underage sex. These accounts, purportedly run by children themselves, often link to off-platform content trading sites. The report also highlights data from network mapping software Maltego, which found that 112 of these accounts collectively amassed 22,000 unique followers.

Algorithmic Promotion and Content Recommendations

Instagram’s algorithms play a significant role in connecting pedophiles and guiding them to content sellers. Explicit hashtags related to child exploitation allow users to search for illicit material, leading them to accounts that advertise child-sex content for sale. Test accounts set up by researchers were immediately bombarded with recommendations for child-sex-content sellers and buyers, as well as accounts linking to off-platform content trading sites. The ease with which such content proliferates highlights the need for stricter regulation and algorithmic control.

Disturbing Content and Invitations for In-Person Meetups

The investigation further uncovered accounts on Instagram that invite buyers to commission specific acts involving children. Some accounts even provide menus with prices for videos depicting children harming themselves or engaging in sexual acts with animals. The Journal also reports that, at the right price, children are made available for in-person meetups. These findings underline the urgent need for comprehensive intervention and preventative measures.

Comparison with Other Platforms

Snapchat and TikTok do not appear to facilitate networks of pedophiles seeking child-abuse content to the same extent as Instagram. On Twitter, the investigation identified 128 accounts offering to sell child-sex-abuse material. However, Twitter did not recommend such accounts to the same degree as Instagram, and the platform reportedly removed them more swiftly.

The revelations brought forth by the WSJ investigation demand immediate action to protect vulnerable users from the horrors of child exploitation. It is essential for platforms like Instagram to enhance their policies, technologies, and enforcement mechanisms to prevent the dissemination and promotion of child sexual exploitation content. Collaboration between technology companies, law enforcement agencies, and child protection organizations is crucial in tackling this issue comprehensively. Transparency, research and development of advanced technologies, and government regulations that strike a balance between privacy and safety are key to creating a safer digital environment for children.


-

Cultural differences between the East and the West

Cultural differences between the East and the West -

Vietnam received recognition in the 29th World Travel Awards in numerous categories

Vietnam received recognition in the 29th World Travel Awards in numerous categories -

Vietnamese dishes that fascinate foreigners

Vietnamese dishes that fascinate foreigners -

First NFT vending machine in the world is now operational in New York

First NFT vending machine in the world is now operational in New York -

The LEGO Group today began construction on a new $1 billion factory in Binh Duong, Vietnam

The LEGO Group today began construction on a new $1 billion factory in Binh Duong, Vietnam -

19 DeFi Startups To Watch

19 DeFi Startups To Watch

Google Maps to revolutionize navigation with Satellite features, eliminating dead zones

East Asia’s Growth Outpaces Global Average Amidst China’s Economic Challenges, Says World Bank

Google Maps to revolutionize navigation with Satellite features, eliminating dead zones

EU Probes Apple, Google and Meta for Potential Violations of New Digital Law

Exploring the Enchanting Wonders of Hawaii- A Journey to Remember

Vibrant Activities in Ha Nam Cultural and Tourism Week 2023

Exploring the Enchanting Wonders of Hawaii- A Journey to Remember

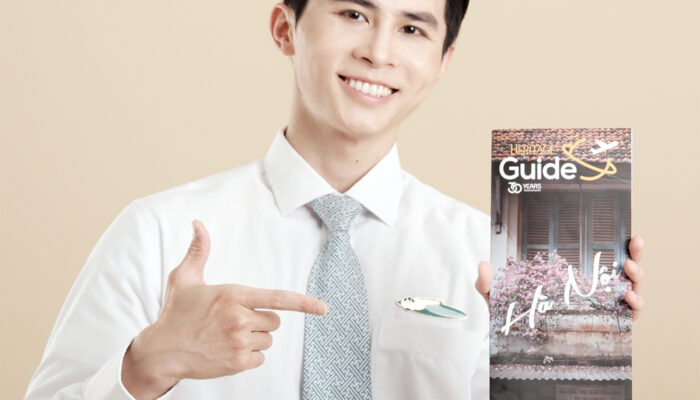

Unveiling the Heritage Guide: Vietnam Airlines’ Exquisite Travel Companion for Exploring Vietnam

Thomson Medical Group Expands Southeast Asian Presence with Acquisition of FV Hospital in Vietnam

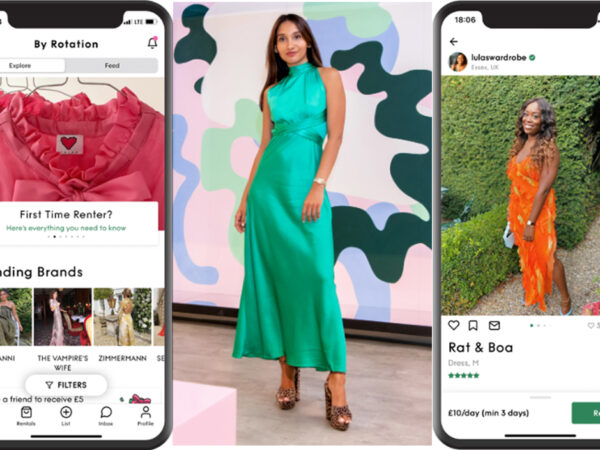

By Rotation: The Startup Revolutionizing Fashion with Peer-to-Peer Clothing Rental

NVIDIA and Johnson & Johnson MedTech’s AI Collaboration Revolutionizing Surgery

Google Maps to revolutionize navigation with Satellite features, eliminating dead zones

Valentino Joins the Metaverse: An Exciting Partnership with UNXD

Coinbase Faces Technical Woes Amidst Bitcoin Surge to $64,000

Coinbase Faces Technical Woes Amidst Bitcoin Surge to $64,000

Exploring the Enchanting Wonders of Hawaii- A Journey to Remember

Abode: Artist Challenges Adobe’s Dominance with Lifetime Creative Software Suite

By Rotation: The Startup Revolutionizing Fashion with Peer-to-Peer Clothing Rental

Threads App’s Rollercoaster Ride: Plummeting 82% of Users Raise Concerns for Meta’s Social Experiment

Job Interview Red Flags: Phrases to avoid according to a former Google recruiter

Exploring the Enchanting Wonders of Hawaii- A Journey to Remember

Vibrant Activities in Ha Nam Cultural and Tourism Week 2023

Thomson Medical Group Expands Southeast Asian Presence with Acquisition of FV Hospital in Vietnam

By Rotation: The Startup Revolutionizing Fashion with Peer-to-Peer Clothing Rental

- Google Maps to revolutionize navigation with Satellite features, eliminating dead zones - April 22, 2024

- East Asia’s Growth Outpaces Global Average Amidst China’s Economic Challenges, Says World Bank - April 4, 2024

- EU Probes Apple, Google and Meta for Potential Violations of New Digital Law - March 27, 2024